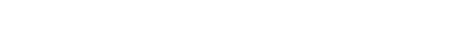

GPU Inference and Training Optimization

Cut your AI/ML infrastructure costs by 30–70% while boosting inference speed and training throughput. Our expert GPU optimization covers quantization, kernel fusion, right-sizing, and continuous monitoring across A100, H100, L40S, and other GPU families.

Comprehensive GPU Infrastructure Audit

Identify inefficiencies across your entire GPU stack—cloud instances, on-prem hardware, containers, models, and pipelines. We pinpoint bottlenecks in compute, memory, networking, and execution.

- GPU utilization & idle time analysis

- Memory bandwidth & bottleneck detection

- Multi-GPU & cluster efficiency profiling
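
As a rough illustration of idle-time analysis, the sketch below summarizes evenly spaced GPU utilization samples. Samples like these could, for example, be collected with `nvidia-smi --query-gpu=utilization.gpu --format=csv,noheader,nounits -l 60`. The helper name, the 5% idle threshold, and the sample values are hypothetical, not output from a real audit.

```python
from dataclasses import dataclass


@dataclass
class UtilizationSummary:
    mean_util_pct: float   # average utilization across all samples
    idle_fraction: float   # share of samples below the idle threshold
    idle_seconds: float    # idle samples multiplied by the sampling interval


def summarize_utilization(samples_pct, interval_s=1.0, idle_threshold_pct=5.0):
    """Summarize evenly spaced GPU utilization samples (values 0-100).

    Hypothetical helper: any sample below idle_threshold_pct counts as idle.
    """
    if not samples_pct:
        raise ValueError("need at least one utilization sample")
    idle = sum(1 for s in samples_pct if s < idle_threshold_pct)
    return UtilizationSummary(
        mean_util_pct=sum(samples_pct) / len(samples_pct),
        idle_fraction=idle / len(samples_pct),
        idle_seconds=idle * interval_s,
    )


# Made-up samples taken once per minute over ten minutes.
samples = [0, 0, 85, 92, 88, 3, 0, 90, 95, 2]
summary = summarize_utilization(samples, interval_s=60.0)
print(summary)
# UtilizationSummary(mean_util_pct=45.5, idle_fraction=0.5, idle_seconds=300.0)
```

In this toy trace the GPU sits idle for half of the samples, even though average utilization is 45.5%. A mean alone would hide that bursty pattern.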

Model Optimization & Quantization

Dramatically reduce model size and inference latency with quantization (INT8, INT4), mixed precision, pruning, and distillation while keeping accuracy loss minimal. Faster serving, lower memory, smaller bills.

- Post-training quantization (PTQ) & quantization-aware training (QAT)

- Mixed-precision (FP16, BF16, INT8, INT4)

- Model pruning & distillation
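
To show what INT8 quantization does at its simplest, here is a minimal sketch of per-tensor symmetric post-training quantization in plain Python. It also includes the weight-memory arithmetic behind "lower memory." This is a deliberately simplified toy. Real toolkits typically use per-channel or per-group scales, calibration data, and low-precision kernels. The function names are hypothetical.

```python
def quantize_int8_symmetric(weights):
    """Per-tensor symmetric INT8 quantization.

    scale = max|w| / 127, q = clamp(round(w / scale), -127, 127)
    """
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map INT8 codes back to approximate floating-point values."""
    return [v * scale for v in q]


def weight_memory_gb(num_params, bits_per_param):
    """Memory for model weights only (ignores activations and KV cache)."""
    return num_params * bits_per_param / 8 / 1e9


weights = [0.12, -0.5, 0.33, 0.01, -0.27]
q, scale = quantize_int8_symmetric(weights)
recon = dequantize(q, scale)
max_err = max(abs(w - r) for w, r in zip(weights, recon))
print(q)  # [30, -127, 84, 3, -69]
print(f"max reconstruction error: {max_err:.5f} (bound: scale / 2 = {scale / 2:.5f})")

# Weight memory for a 7B-parameter model at different precisions.
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit: {weight_memory_gb(7e9, bits):.1f} GB")
```

Rounding to the nearest code bounds each weight's error by half the scale. Halving the bit width halves weight memory: 14 GB at 16-bit, 7 GB at 8-bit, and 3.5 GB at 4-bit for 7B parameters.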

Inference & Training Acceleration

Shorten inference latency and training time with kernel fusion, compiler optimizations, and efficient serving frameworks. Deploy vLLM, TensorRT, and custom kernels for maximum throughput.

- vLLM, TGI, TensorRT-LLM deployment

- Kernel fusion & custom CUDA kernels

- FlashAttention & PagedAttention
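
PagedAttention, the technique introduced with vLLM, stores the attention key/value (KV) cache in fixed-size blocks rather than one contiguous buffer per sequence. The back-of-the-envelope sketch below compares two allocators. A naive allocator reserves KV-cache memory for the maximum sequence length, while a block-based allocator reserves only the blocks a request actually uses. The model dimensions (32 layers, 32 KV heads, head dimension 128, FP16), the 4,096-token maximum, and the 16-token block size are illustrative assumptions.

```python
import math

MIB = 1024 ** 2


def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_elem=2):
    # Factor 2: each layer stores one key and one value vector per KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def paged_reserved_tokens(seq_len, block_size=16):
    # Block-based allocation: only whole blocks that hold tokens are reserved.
    return math.ceil(seq_len / block_size) * block_size


per_token = kv_bytes_per_token(num_layers=32, num_kv_heads=32, head_dim=128)
max_seq_len = 4096   # naive allocator reserves this much per sequence
actual_len = 1000    # tokens this request actually uses

contiguous_mib = max_seq_len * per_token / MIB
paged_mib = paged_reserved_tokens(actual_len) * per_token / MIB
print(f"KV cache per token: {per_token / MIB:.2f} MiB")
print(f"contiguous reservation: {contiguous_mib:.0f} MiB, paged: {paged_mib:.0f} MiB")
```

Under these assumptions, the paged allocator wastes at most one partially filled block per sequence. The contiguous reservation wastes everything beyond the tokens actually generated, which leaves less room for concurrent requests.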

GPU Instance Right-Sizing

Match GPU hardware to workload requirements. We analyze A100, H100, L40S, RTX, MI300, and other GPU models to recommend the instance families that best balance performance and cost.

- GPU and instance family selection (A100, H100, L40S, etc.)

- Spot/preemptible GPU strategies

- Multi-cloud GPU cost comparison
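
Right-sizing largely comes down to comparing cost per unit of work among the options that can actually run the workload. The sketch below ranks hypothetical GPU options by cost per million generated tokens. It filters out options without enough GPU memory for the model and discounts spot throughput by an assumed preemption overhead. All option names, prices, and throughput figures are placeholders, not real cloud quotes. In practice, throughput should be measured for your own model.

```python
from dataclasses import dataclass


@dataclass
class GpuOption:
    name: str
    hourly_usd: float                  # placeholder price, not a real quote
    gpu_mem_gb: float                  # total GPU memory available
    tokens_per_s: float                # throughput measured for your model
    preemption_overhead: float = 0.0   # assumed share of work lost to spot interruptions


def cost_per_million_tokens(opt):
    effective_tps = opt.tokens_per_s * (1.0 - opt.preemption_overhead)
    return opt.hourly_usd / (effective_tps * 3600) * 1_000_000


def cheapest_fit(options, required_mem_gb):
    """Return the lowest cost-per-token option with enough GPU memory."""
    fits = [o for o in options if o.gpu_mem_gb >= required_mem_gb]
    if not fits:
        raise ValueError("no option has enough GPU memory")
    return min(fits, key=cost_per_million_tokens)


options = [
    GpuOption("gpu-a-ondemand", hourly_usd=4.00, gpu_mem_gb=80, tokens_per_s=2000),
    GpuOption("gpu-a-spot", hourly_usd=1.60, gpu_mem_gb=80, tokens_per_s=2000,
              preemption_overhead=0.10),
    GpuOption("gpu-b-ondemand", hourly_usd=0.60, gpu_mem_gb=48, tokens_per_s=900),
]
for o in options:
    print(f"{o.name}: ${cost_per_million_tokens(o):.3f} per 1M tokens")
print(cheapest_fit(options, required_mem_gb=40).name)  # gpu-b-ondemand
print(cheapest_fit(options, required_mem_gb=60).name)  # gpu-a-spot
```

The recommendation flips with the memory requirement. The cheapest option per token is only viable if the model fits, and spot capacity can win once its interruption overhead is priced in.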

Container & Pipeline Optimization

Streamline ML pipelines and containerized workloads for GPU efficiency. We optimize Docker images, Kubernetes scheduling, batch processing, and caching to maximize GPU utilization.

- Docker image optimization & layer caching

- Kubernetes GPU scheduling & node pools

- Batch inference & request batching
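
Request batching trades a small amount of queueing delay for much higher GPU utilization. The sketch below shows a simple size-or-timeout policy. A batch is dispatched when it reaches a maximum size or when its oldest request has waited a maximum time. The function and parameter names and values are hypothetical. Serving frameworks such as vLLM and TGI go further with continuous batching, which adds and removes requests at each decoding step.

```python
def form_batches(arrival_ms, max_batch_size, max_wait_ms):
    """Group request arrival times (ms) into batches.

    A batch closes when it holds max_batch_size requests, or when a new
    request arrives more than max_wait_ms after the batch's oldest request
    (the batch would already have been dispatched on timeout).
    """
    batches, current = [], []
    for t in sorted(arrival_ms):
        if current and t - current[0] > max_wait_ms:
            batches.append(current)   # timed out before this request arrived
            current = []
        current.append(t)
        if len(current) == max_batch_size:
            batches.append(current)   # full: dispatch immediately
            current = []
    if current:
        batches.append(current)       # flush whatever is left
    return batches


arrivals = [0, 2, 3, 15, 16, 17, 18, 40]
batches = form_batches(arrivals, max_batch_size=4, max_wait_ms=10)
print(batches)  # [[0, 2, 3], [15, 16, 17, 18], [40]]
```

Here eight requests become three GPU calls. Raising `max_wait_ms` produces larger batches at the cost of higher tail latency.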

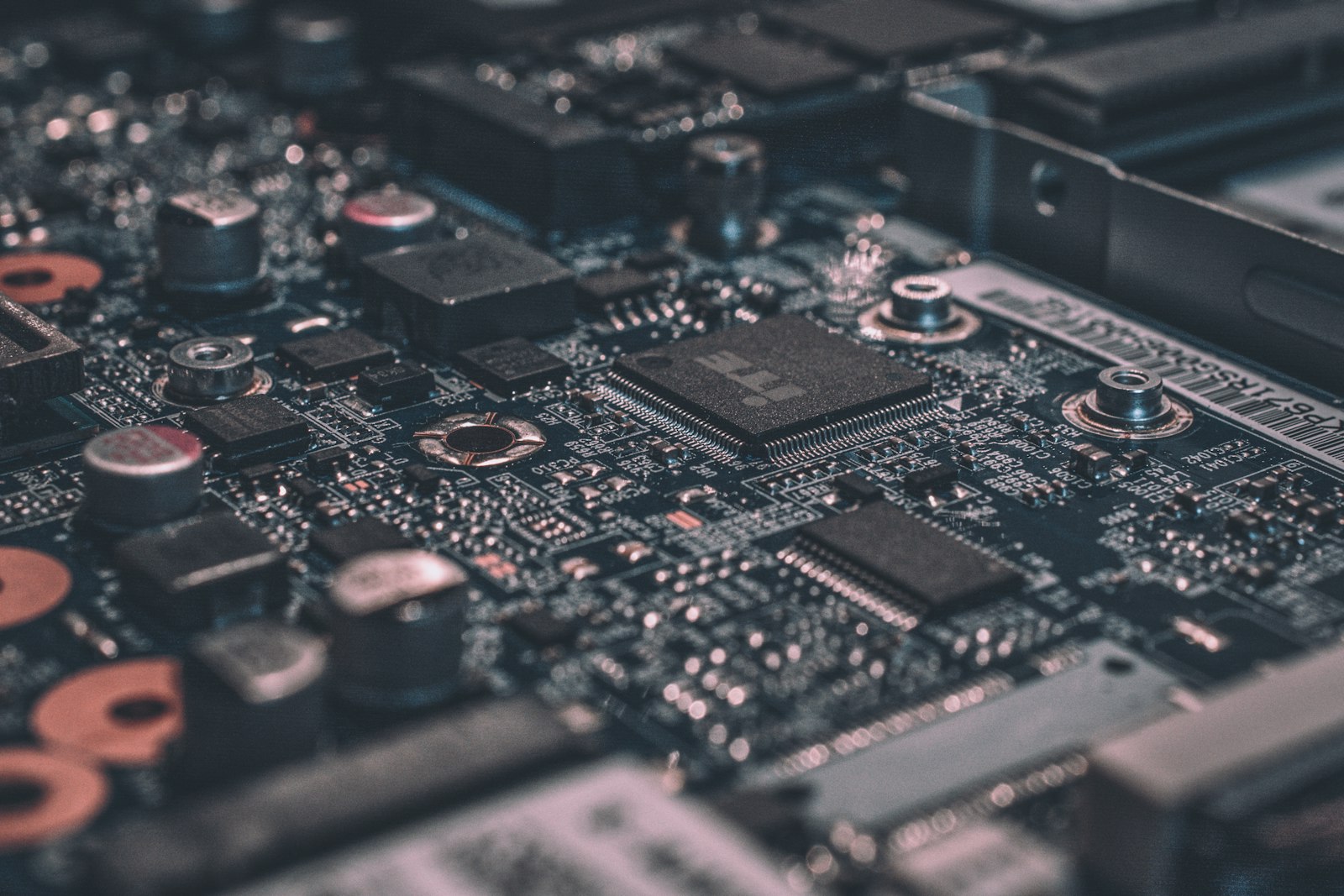

Monitoring & Continuous Optimization

Sustain 30–70% cost savings as workloads evolve. We provide real-time GPU metrics, performance dashboards, and proactive optimization recommendations to maintain peak efficiency.

- Real-time GPU utilization dashboards

- Cost-per-inference and cost-per-training-job tracking

- Automated performance regression detection
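
Cost-per-inference tracking and regression detection can start from two simple calculations. The first amortizes GPU spend over the requests served. The second compares a recent latency window against a baseline. The sketch below uses the median with a 10% tolerance. The helper names, the threshold, and all figures are illustrative assumptions. A production setup would typically also watch tail percentiles such as p95 and p99.

```python
from statistics import median


def cost_per_inference(hourly_usd, hours, requests_served):
    """Amortized GPU cost of one request over a billing window."""
    return hourly_usd * hours / requests_served


def is_latency_regression(baseline_ms, current_ms, tolerance=0.10):
    """Flag a regression when median latency exceeds baseline by more than tolerance."""
    return median(current_ms) > median(baseline_ms) * (1 + tolerance)


# Hypothetical day: $2.50/h GPU, 24 h, 1.2M requests served.
print(f"${cost_per_inference(2.50, 24, 1_200_000):.6f} per request")

baseline = [100, 102, 98, 101, 99]
after_deploy = [112, 115, 109, 118, 111]
print(is_latency_regression(baseline, after_deploy))  # True (median 112 > 110)
```

Running the same check after every deployment or model update turns a one-off benchmark into an automated guardrail.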